In the world of data science, not every boundary is sharply drawn. Think of a painter blending colours on a canvas: blue slowly merges into green, with no clear line separating them. Similarly, in real-world datasets, groups of data points often overlap, making it difficult to define where one cluster ends and another begins. This is where the Expectation-Maximisation (EM) algorithm comes in. It is an iterative statistical method that separates blended patterns by estimating a model's parameters even when part of the information, such as which group each point came from, is hidden.

The Need for Soft Clustering

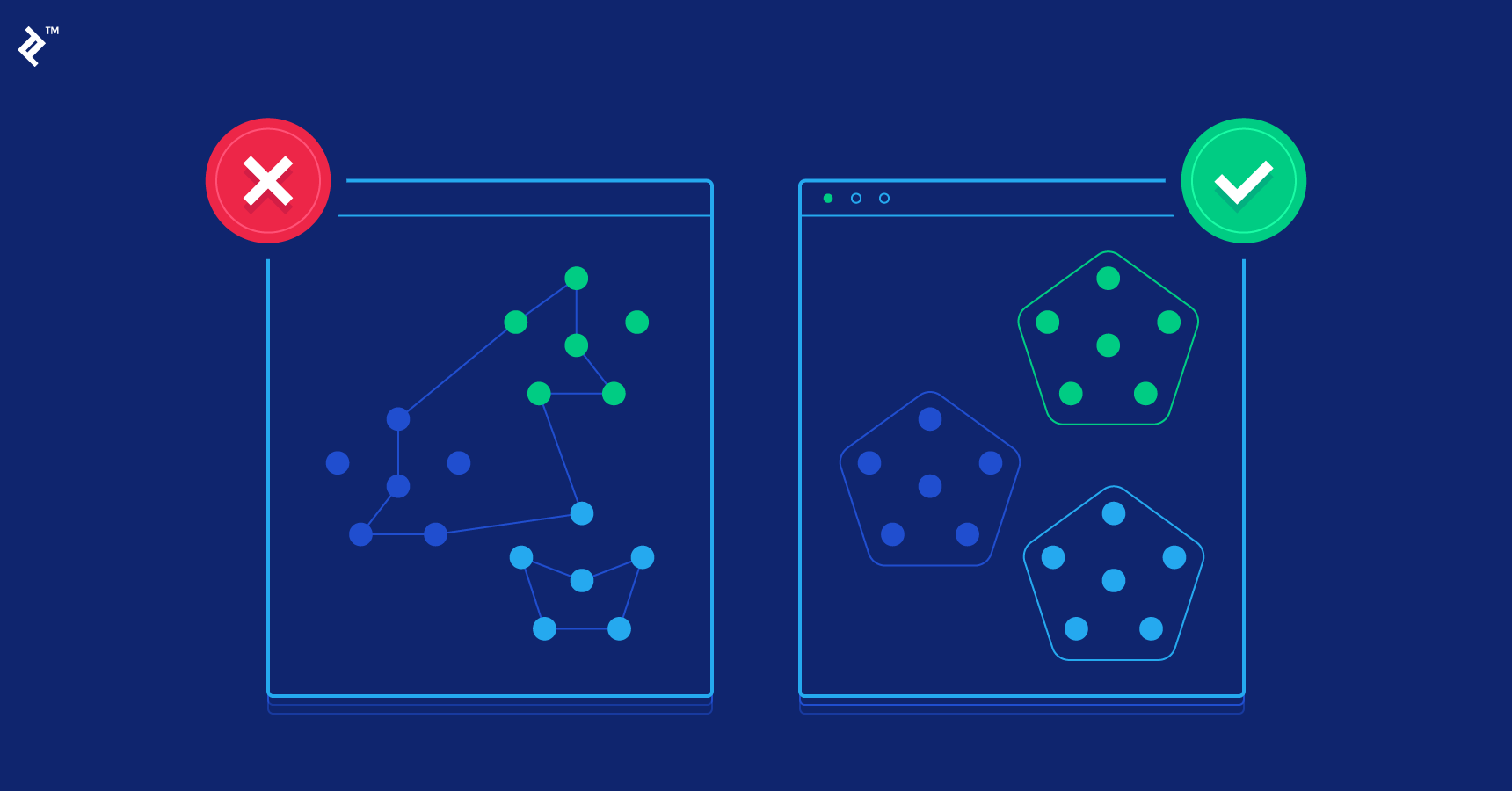

Most clustering techniques, like K-Means, assign each data point to a single cluster — as if every shade of colour belonged only to one family. But life rarely fits into neat boxes. Customers may exhibit traits of multiple segments, or genes may express characteristics that belong to more than one biological process.

EM addresses this ambiguity with soft clustering. Instead of receiving a single label, each data point gets a probability of belonging to every cluster, and these probabilities sum to one. This aligns better with how the real world behaves, full of overlaps and uncertainties rather than clear-cut divisions.
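To make the difference concrete, here is a minimal Python sketch. It assumes two one-dimensional Gaussian clusters whose parameters are already known; the values and function names are hypothetical and chosen only for illustration. A point lying exactly halfway between the two cluster centres gets a 50/50 soft membership, while a hard assignment has to pick one cluster arbitrarily.

```python
import math


def normal_pdf(x, mean, std):
    """Density of a 1-D normal distribution at x."""
    z = (x - mean) / std
    return math.exp(-0.5 * z * z) / (std * math.sqrt(2 * math.pi))


def soft_memberships(x, means, stds, weights):
    """Probability that x belongs to each cluster (the values sum to 1)."""
    scores = [w * normal_pdf(x, m, s) for m, s, w in zip(means, stds, weights)]
    total = sum(scores)
    return [s / total for s in scores]


# Hypothetical parameters for two overlapping clusters ("blue" and "green").
means, stds, weights = [0.0, 3.0], [1.0, 1.0], [0.5, 0.5]

x = 1.5  # exactly halfway between the two cluster centres
probs = soft_memberships(x, means, stds, weights)
hard = max(range(len(probs)), key=probs.__getitem__)  # a hard label keeps only the top cluster

print(probs)  # [0.5, 0.5]
print(hard)   # 0 (the tie is broken arbitrarily in favour of the first cluster)
```

The soft version preserves the information that this point is genuinely ambiguous; the hard label throws it away.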

For learners exploring statistical methods, a data science course offers a solid foundation for understanding the mathematics that drives algorithms like EM, transforming abstract theory into practical analytical skills.

The Intuition Behind EM

Imagine you’re blindfolded in a room filled with several musical instruments playing simultaneously. You can hear all the sounds, but don’t know which notes belong to which instrument. The EM algorithm acts as your guide — helping you gradually distinguish the different instruments by refining your guesses over time.

This algorithm works through two key steps:

- Expectation (E-step): Estimate the probability that each data point belongs to each cluster, given the current model parameters.

- Maximisation (M-step): Update the parameters (such as the means, variances, and weights of the clusters) to maximise the expected log-likelihood of the data, with each point weighted by the probabilities from the E-step.
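For a Gaussian mixture with K clusters, both steps have a standard closed form. In the notation below (ours, not the article's), x_i for i = 1..N are the data points; π_k, μ_k and Σ_k are the weight, mean and covariance of cluster k; and γ_ik is the E-step probability that point i belongs to cluster k.

```latex
% E-step: responsibility of cluster k for point x_i
\gamma_{ik} = \frac{\pi_k \, \mathcal{N}(x_i \mid \mu_k, \Sigma_k)}
                   {\sum_{j=1}^{K} \pi_j \, \mathcal{N}(x_i \mid \mu_j, \Sigma_j)}

% M-step: weighted re-estimation of each cluster's parameters
N_k = \sum_{i=1}^{N} \gamma_{ik}, \qquad
\pi_k = \frac{N_k}{N}, \qquad
\mu_k = \frac{1}{N_k} \sum_{i=1}^{N} \gamma_{ik} \, x_i, \qquad
\Sigma_k = \frac{1}{N_k} \sum_{i=1}^{N} \gamma_{ik} \, (x_i - \mu_k)(x_i - \mu_k)^{\top}
```

The M-step is simply a weighted version of the usual mean and covariance formulas, with each point counted fractionally according to γ_ik. The covariance update uses the freshly updated μ_k.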

The two steps alternate until the model converges, meaning the log-likelihood (or the parameters) changes by less than a small tolerance from one iteration to the next.
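The following pure-Python sketch combines the two steps and the convergence check to fit a one-dimensional mixture. It is a teaching example built on several of our own assumptions: the data are 1-D; the means start at evenly spaced quantiles of the sorted data; convergence is declared when the log-likelihood changes by less than `tol`; and a small variance floor stops a cluster from collapsing onto a single point. The function name `em_gmm_1d` is hypothetical.

```python
import math
import random


def normal_pdf(x, mean, var):
    """Density of a 1-D normal distribution with the given mean and variance."""
    return math.exp(-0.5 * (x - mean) ** 2 / var) / math.sqrt(2 * math.pi * var)


def em_gmm_1d(data, k=2, tol=1e-6, max_iter=500, var_floor=1e-6):
    """Fit a 1-D Gaussian mixture by EM.

    Returns (weights, means, variances, log_likelihood_history).
    """
    n = len(data)
    ordered = sorted(data)
    # Initialisation heuristic (an assumption): means at evenly spaced quantiles,
    # every variance equal to the overall variance, equal weights.
    means = [ordered[int((j + 0.5) * n / k)] for j in range(k)]
    mu = sum(data) / n
    variances = [sum((x - mu) ** 2 for x in data) / n] * k
    weights = [1.0 / k] * k
    history = []

    for _ in range(max_iter):
        # E-step: resp[i][j] = probability that point i belongs to cluster j.
        resp, log_lik = [], 0.0
        for x in data:
            scores = [w * normal_pdf(x, m, v) for w, m, v in zip(weights, means, variances)]
            total = sum(scores)
            log_lik += math.log(total)
            resp.append([s / total for s in scores])
        history.append(log_lik)

        # Convergence check: stop once the log-likelihood barely changes.
        if len(history) > 1 and abs(history[-1] - history[-2]) < tol:
            break

        # M-step: weighted re-estimation of each cluster's parameters.
        for j in range(k):
            nj = sum(r[j] for r in resp)
            weights[j] = nj / n
            means[j] = sum(r[j] * x for r, x in zip(resp, data)) / nj
            var = sum(r[j] * (x - means[j]) ** 2 for r, x in zip(resp, data)) / nj
            variances[j] = max(var, var_floor)

    return weights, means, variances, history


# Usage: synthetic data from two overlapping groups centred at 0 and 5.
rng = random.Random(42)
data = [rng.gauss(0, 1) for _ in range(200)] + [rng.gauss(5, 1) for _ in range(200)]
weights, means, variances, history = em_gmm_1d(data)
print([round(m, 2) for m in means], f"after {len(history)} E-steps")
```

The `history` list records the log-likelihood at every iteration. This makes it easy to confirm that the fit stopped because the changes became tiny, not because it ran out of iterations.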

Gaussian Mixture Models: A Flexible Representation

The EM algorithm is most famously applied to Gaussian Mixture Models (GMMs), where the data are assumed to come from a weighted combination of several Gaussian (normal) distributions. Each distribution represents a cluster, and EM estimates each Gaussian's mean, covariance (spread), and mixing weight to best describe the data.
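The generative story behind a GMM fits in a few lines. The Python sketch below evaluates the mixture density as a weighted sum of Gaussian densities. It draws samples by first choosing a component according to its weight and then sampling from that component's Gaussian. The function names and parameter values are hypothetical. EM works in the reverse direction: it sees only the samples and has to infer the weights, means and spreads.

```python
import math
import random


def normal_pdf(x, mean, std):
    """Density of a 1-D normal distribution at x."""
    return math.exp(-0.5 * ((x - mean) / std) ** 2) / (std * math.sqrt(2 * math.pi))


def mixture_pdf(x, weights, means, stds):
    """p(x) = sum_k weights[k] * N(x | means[k], stds[k]**2); weights should sum to 1."""
    return sum(w * normal_pdf(x, m, s) for w, m, s in zip(weights, means, stds))


def sample_mixture(n, weights, means, stds, rng):
    """Draw n points: choose a component by its weight, then sample from its Gaussian."""
    points = []
    for _ in range(n):
        k = rng.choices(range(len(weights)), weights=weights)[0]
        points.append(rng.gauss(means[k], stds[k]))
    return points


# Hypothetical two-component mixture.
weights, means, stds = [0.3, 0.7], [0.0, 4.0], [1.0, 1.5]
samples = sample_mixture(1000, weights, means, stds, random.Random(0))
print(round(mixture_pdf(2.0, weights, means, stds), 4))
```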

Think of this as trying to model a melody composed of multiple instruments playing together — EM helps isolate the sound of each instrument, even when their frequencies overlap.

This approach is especially powerful in areas like image segmentation, customer segmentation, and anomaly detection — where boundaries are fluid, and uncertainty is part of the challenge.

Students undertaking a data science course in Mumbai often get hands-on experience with such models, learning to balance statistical precision with computational practicality.

The Mathematics of Iteration

At its core, EM is an optimisation algorithm that maximises the likelihood function, a mathematical measure of how well a set of parameters explains the observed data. What sets EM apart is that part of the information is missing. It never observes which cluster each data point actually belongs to, so the likelihood cannot simply be maximised in one step.
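For a Gaussian mixture, the difficulty can be seen directly in the log-likelihood. In our notation, there are N data points x_i and K clusters with weights π_k, means μ_k and covariances Σ_k, and z_i denotes the unknown cluster of point i. The sum over clusters sits inside the logarithm because z_i is unknown. If the labels were known, the logarithm would act on a single term, and each cluster's parameters could be estimated separately.

```latex
% Observed-data log-likelihood: the sum over clusters is trapped inside the log
\log p(X \mid \theta) = \sum_{i=1}^{N} \log \sum_{k=1}^{K} \pi_k \, \mathcal{N}(x_i \mid \mu_k, \Sigma_k)

% Complete-data log-likelihood, if each point's cluster z_i were known
\log p(X, Z \mid \theta) = \sum_{i=1}^{N} \log\!\big(\pi_{z_i} \, \mathcal{N}(x_i \mid \mu_{z_i}, \Sigma_{z_i})\big)
```

EM works with the second, easier form by replacing the unknown labels with their probabilities.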

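It compensates for this missing information by alternating between estimating probabilities (soft assignments) and refining model parameters. Each cycle is guaranteed not to decrease the likelihood, and the process typically settles at a local maximum. This is the best explanation reachable from the chosen starting point under the model's assumptions, but not necessarily the best overall. In practice, EM is often run from several different initialisations, and the result with the highest likelihood is kept.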
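The guarantee that a cycle never lowers the likelihood follows from a standard lower-bound argument, sketched here in our own notation. For each point x_i, let q_i(k) be any probability distribution over the K clusters that is positive wherever p(x_i, z_i = k | θ) is. Here p(x_i, z_i = k | θ) is the joint probability of the point and cluster k under parameters θ. Jensen's inequality then bounds the log-likelihood from below:

```latex
% Jensen's inequality, for any distribution q_i over clusters
\log p(x_i \mid \theta)
  = \log \sum_{k=1}^{K} q_i(k) \, \frac{p(x_i, z_i = k \mid \theta)}{q_i(k)}
  \;\ge\; \sum_{k=1}^{K} q_i(k) \log \frac{p(x_i, z_i = k \mid \theta)}{q_i(k)}

% Summing over points defines the lower bound B(q, theta)
\log p(X \mid \theta) \;\ge\; \mathcal{B}(q, \theta)
  = \sum_{i=1}^{N} \sum_{k=1}^{K} q_i(k) \log \frac{p(x_i, z_i = k \mid \theta)}{q_i(k)}

% E-step: q_i^{(t)}(k) = p(z_i = k \mid x_i, \theta^{(t)}) makes the bound tight at \theta^{(t)}
% M-step: \theta^{(t+1)} maximises \mathcal{B}(q^{(t)}, \theta) over \theta
\log p(X \mid \theta^{(t+1)}) \;\ge\; \mathcal{B}(q^{(t)}, \theta^{(t+1)})
  \;\ge\; \mathcal{B}(q^{(t)}, \theta^{(t)}) = \log p(X \mid \theta^{(t)})
```

The argument only shows that the likelihood cannot go down. It says nothing about reaching the global maximum, which is why the starting point matters.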

This elegant dance of estimation and optimisation makes EM a cornerstone technique in probabilistic modelling.

Applications Across Industries

From finance to healthcare, EM plays a crucial role wherever patterns are hidden beneath uncertainty. In marketing, it helps group customers based on behaviour when boundaries between segments aren’t distinct. In medicine, it can cluster patient profiles even when symptoms overlap.

Because it provides probabilistic assignments, EM doesn't just categorise: it also quantifies how confident each assignment is. This makes it valuable in risk assessment, where understanding degrees of belonging can guide better decision-making.
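One simple way to turn soft assignments into a confidence score is the normalised entropy of a point's membership probabilities. It is 0 when the point clearly belongs to one cluster and 1 when it is split evenly. The sketch below assumes an already-fitted one-dimensional model with hypothetical parameters. The 0.8 review threshold is an arbitrary illustrative choice, not a standard.

```python
import math


def normal_pdf(x, mean, std):
    """Density of a 1-D normal distribution at x."""
    return math.exp(-0.5 * ((x - mean) / std) ** 2) / (std * math.sqrt(2 * math.pi))


def memberships(x, weights, means, stds):
    """Soft cluster memberships of x (they sum to 1)."""
    scores = [w * normal_pdf(x, m, s) for w, m, s in zip(weights, means, stds)]
    total = sum(scores)
    return [s / total for s in scores]


def ambiguity(probs):
    """Normalised entropy (needs at least two clusters): 0 = certain, 1 = evenly split."""
    entropy = -sum(p * math.log(p) for p in probs if p > 0)
    return entropy / math.log(len(probs))


# Hypothetical fitted model with two segments.
weights, means, stds = [0.5, 0.5], [0.0, 3.0], [1.0, 1.0]
REVIEW_THRESHOLD = 0.8  # illustrative: flag points whose best membership is below 80%

for x in [-1.0, 1.5, 4.0]:
    probs = memberships(x, weights, means, stds)
    label = "review" if max(probs) < REVIEW_THRESHOLD else "confident"
    print(x, [round(p, 3) for p in probs], round(ambiguity(probs), 3), label)
```

Points near a cluster centre come out as "confident", while the point between the two centres is flagged for review.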

For analysts or students pursuing a data science course in Mumbai, mastering EM offers insight into how uncertainty can be harnessed rather than feared — turning ambiguity into actionable intelligence.

Conclusion

The Expectation-Maximisation algorithm stands as a bridge between clarity and chaos. It doesn’t demand perfect information; instead, it thrives in the unknown, iteratively refining its understanding until order emerges from complexity.

Just as a painter layers colours to create depth, EM layers probabilities to reveal structure in data that seems unstructured. For those eager to explore this interplay between art and analytics, enrolling in a data science course can illuminate how mathematics and intuition converge — transforming raw data into elegant, interpretable patterns.

Business name: ExcelR- Data Science, Data Analytics, Business Analytics Course Training Mumbai

Address: 304, 3rd Floor, Pratibha Building. Three Petrol pump, Lal Bahadur Shastri Rd, opposite Manas Tower, Pakhdi, Thane West, Thane, Maharashtra 400602

Phone: 09108238354

Email: enquiry@excelr.com